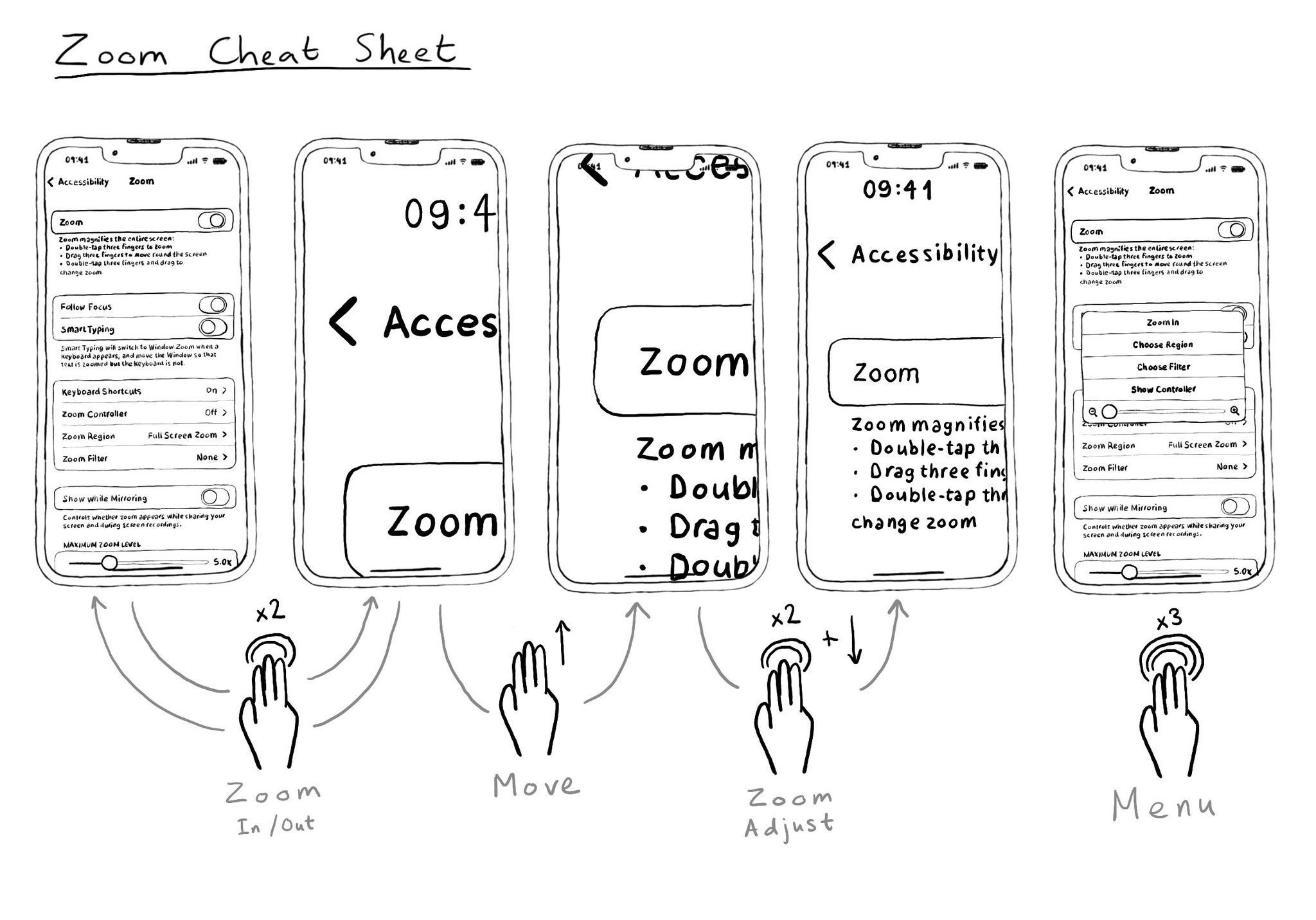

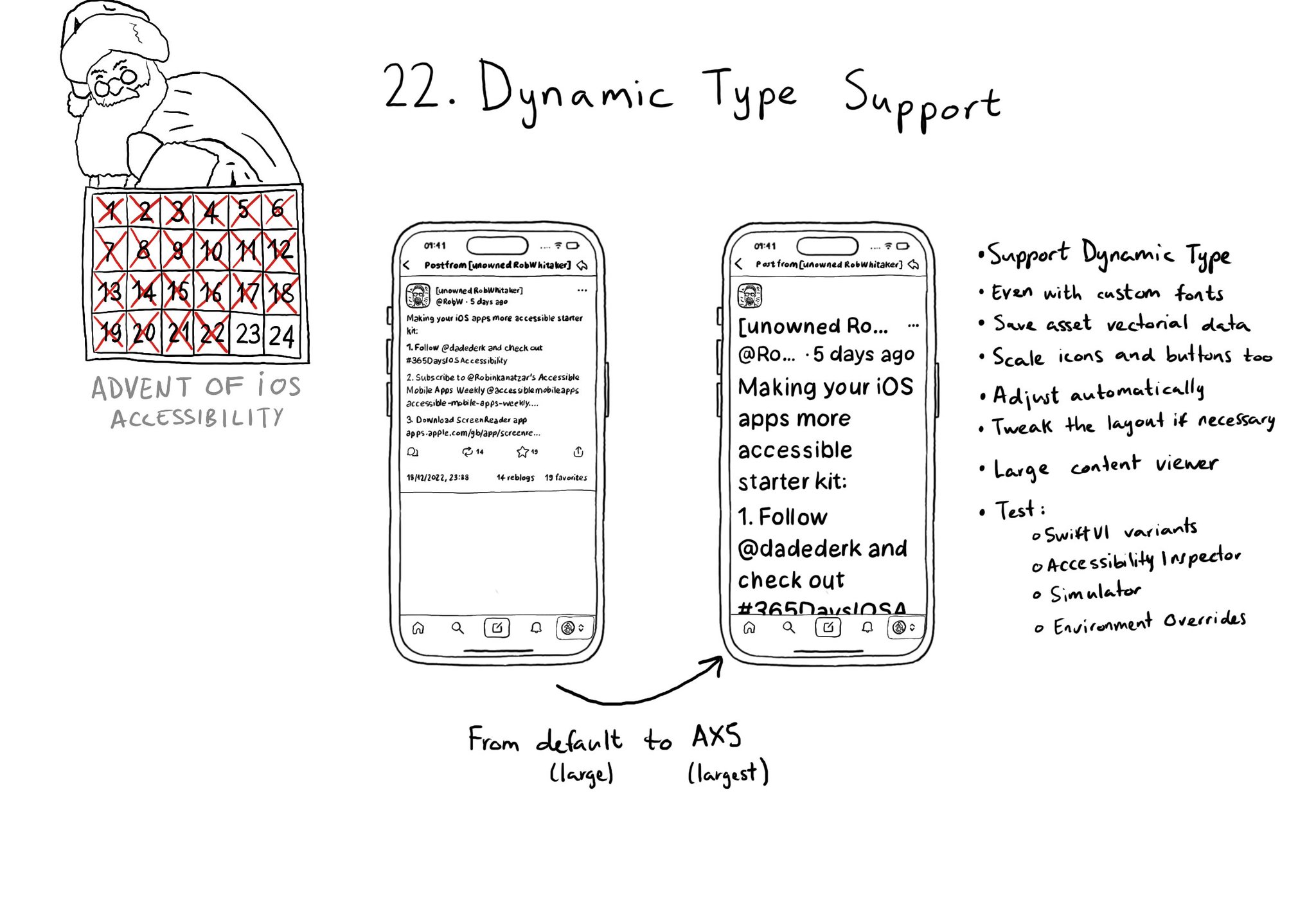

Zoom lets users magnify part of the screen so they can see details more closely. It is useful to know the gestures for zooming in and out, moving around the screen, adjusting the zoom level, and showing the Zoom menu.

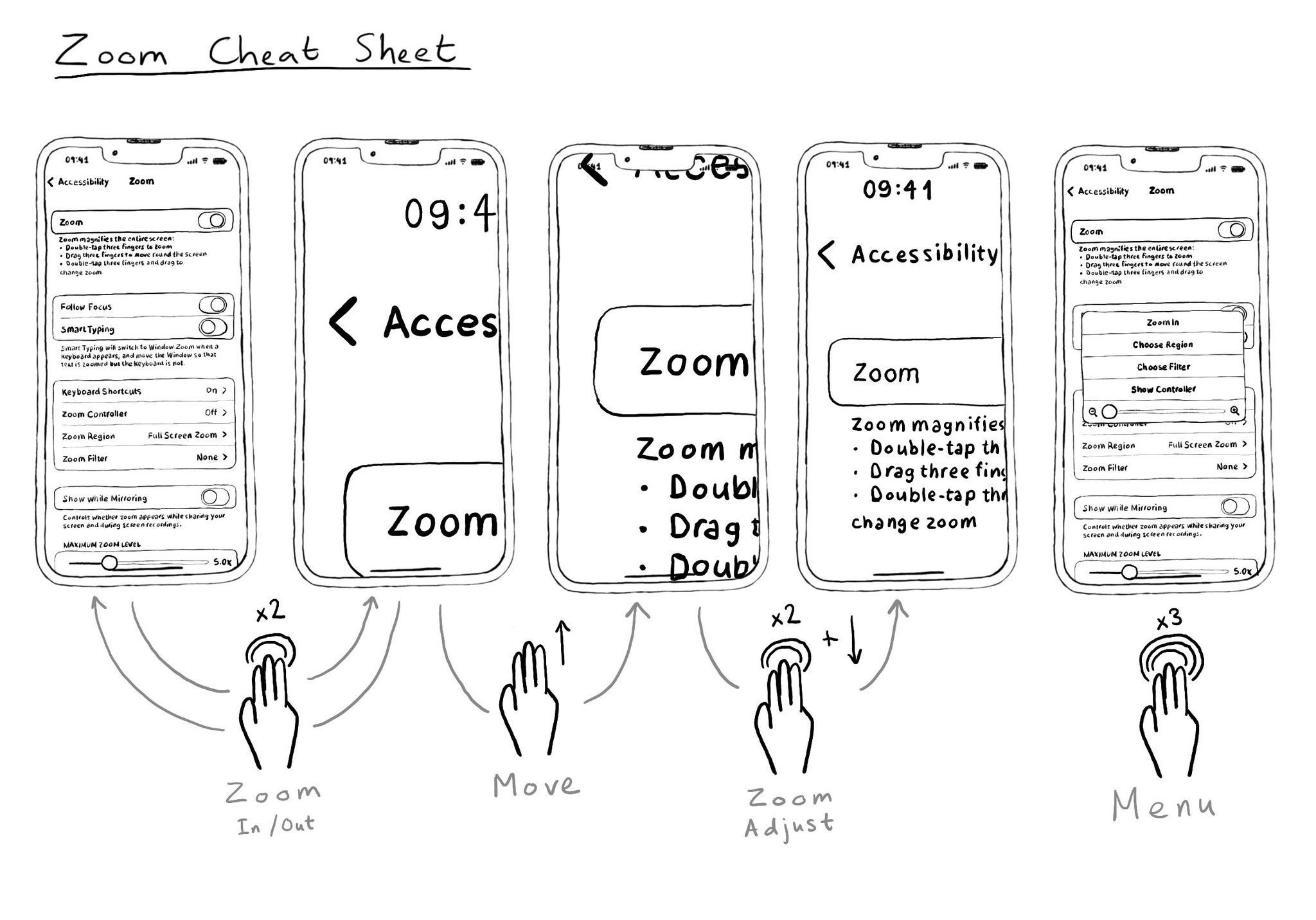

I used to think of Zoom as an accessibility feature that didn't need any support from developers. But testing with Zoom can actually reveal issues and bad practices. Watch out for buttons that change something far away on the screen, because the change may happen outside the magnified area. Using a snackbar is usually not a good idea either, especially if it lets you do or undo something. Because snackbars are ephemeral, they're difficult to spot and reach with Zoom, VoiceOver, Switch Control, a keyboard... Confirming a destructive action with a dialog might be better.
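As a rough illustration of the dialog alternative, here is a minimal UIKit sketch. The `ItemViewController` class, the `deleteTapped` and `performDelete` methods, and the alert strings are all hypothetical names made up for this example. Only `UIAlertController` and `UIAlertAction` are real UIKit APIs.

```swift
import UIKit

// Hypothetical view controller, used only to illustrate the idea.
final class ItemViewController: UIViewController {

    // Hypothetical handler, assumed to be wired to a delete button.
    @objc func deleteTapped() {
        // A modal alert stays on screen until the user answers,
        // unlike a snackbar that disappears on its own.
        let alert = UIAlertController(
            title: "Delete item?",
            message: "This action cannot be undone.",
            preferredStyle: .alert
        )
        alert.addAction(UIAlertAction(title: "Cancel", style: .cancel))
        alert.addAction(UIAlertAction(title: "Delete", style: .destructive) { [weak self] _ in
            self?.performDelete()
        })
        present(alert, animated: true)
    }

    private func performDelete() {
        // Hypothetical: remove the item from the model here.
    }
}
```

Because the alert is modal and centered, it is easier to find when the screen is magnified, and assistive technologies move their focus to it when it appears.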

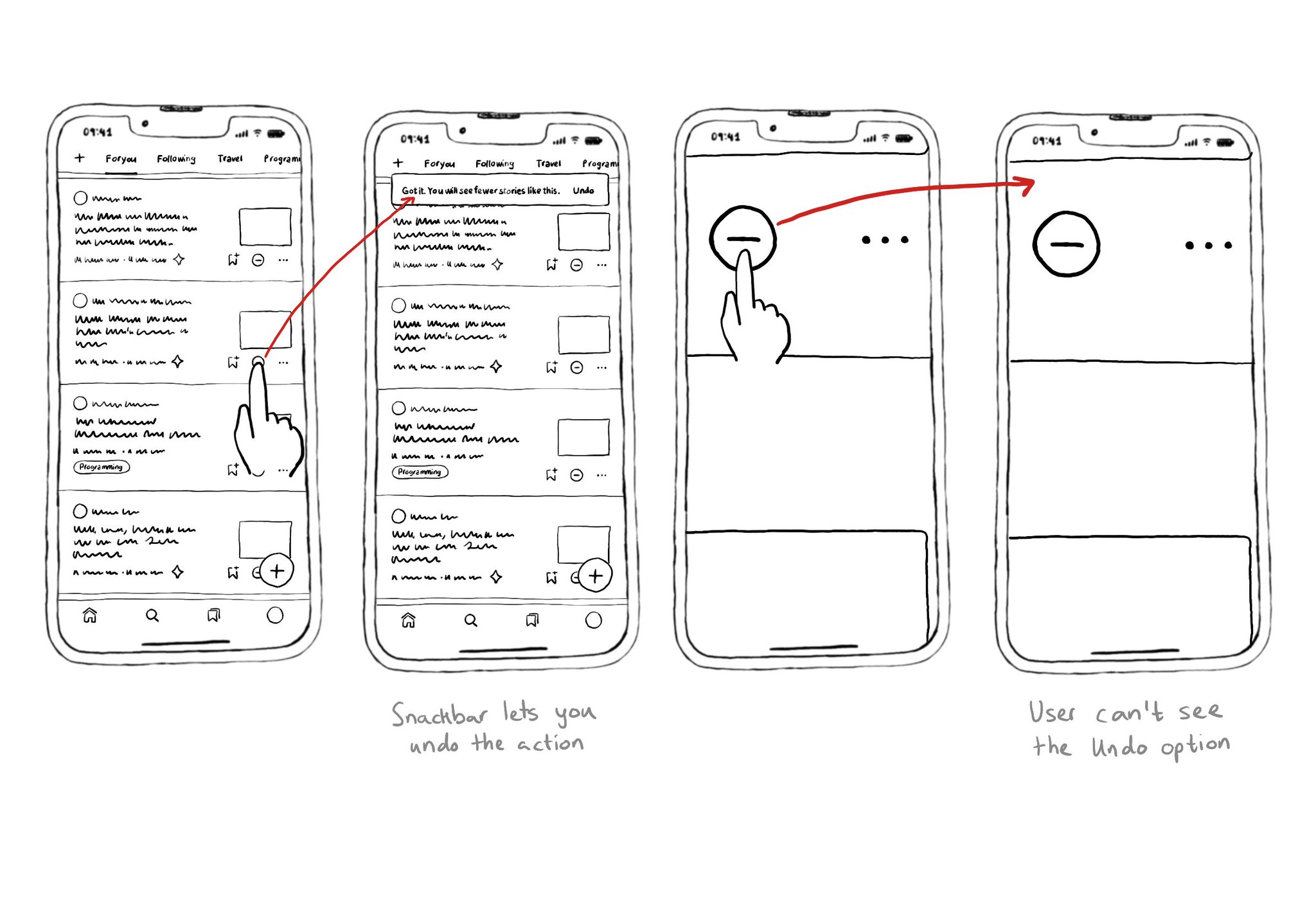

Make sure you support Dynamic Type up to the largest text size available. Keep in mind that there are five extra accessibility sizes that users can enable from the Accessibility settings. Supporting them can make a huge difference for lots of users.
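A minimal sketch of what supporting Dynamic Type can look like in UIKit. The function names `makeBodyLabel` and `updateAxis` are made up for this example. The layout switch is just one possible approach to the larger sizes, not the only one.

```swift
import UIKit

// Hypothetical helper that builds a label ready for Dynamic Type.
func makeBodyLabel(text: String) -> UILabel {
    let label = UILabel()
    label.text = text
    // Use a text style instead of a fixed point size.
    label.font = UIFont.preferredFont(forTextStyle: .body)
    // Update the font automatically when the user changes the text size.
    label.adjustsFontForContentSizeCategory = true
    // Let the text wrap instead of truncating at large sizes.
    label.numberOfLines = 0
    return label
}

// Hypothetical helper: stack content vertically when one of the
// extra accessibility sizes is in use, so text has more room.
func updateAxis(of stackView: UIStackView, for traits: UITraitCollection) {
    let isAccessibilitySize = traits.preferredContentSizeCategory.isAccessibilityCategory
    stackView.axis = isAccessibilitySize ? .vertical : .horizontal
}
```

You could call `updateAxis(of:for:)` whenever the trait collection changes, passing the view's current `traitCollection`.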

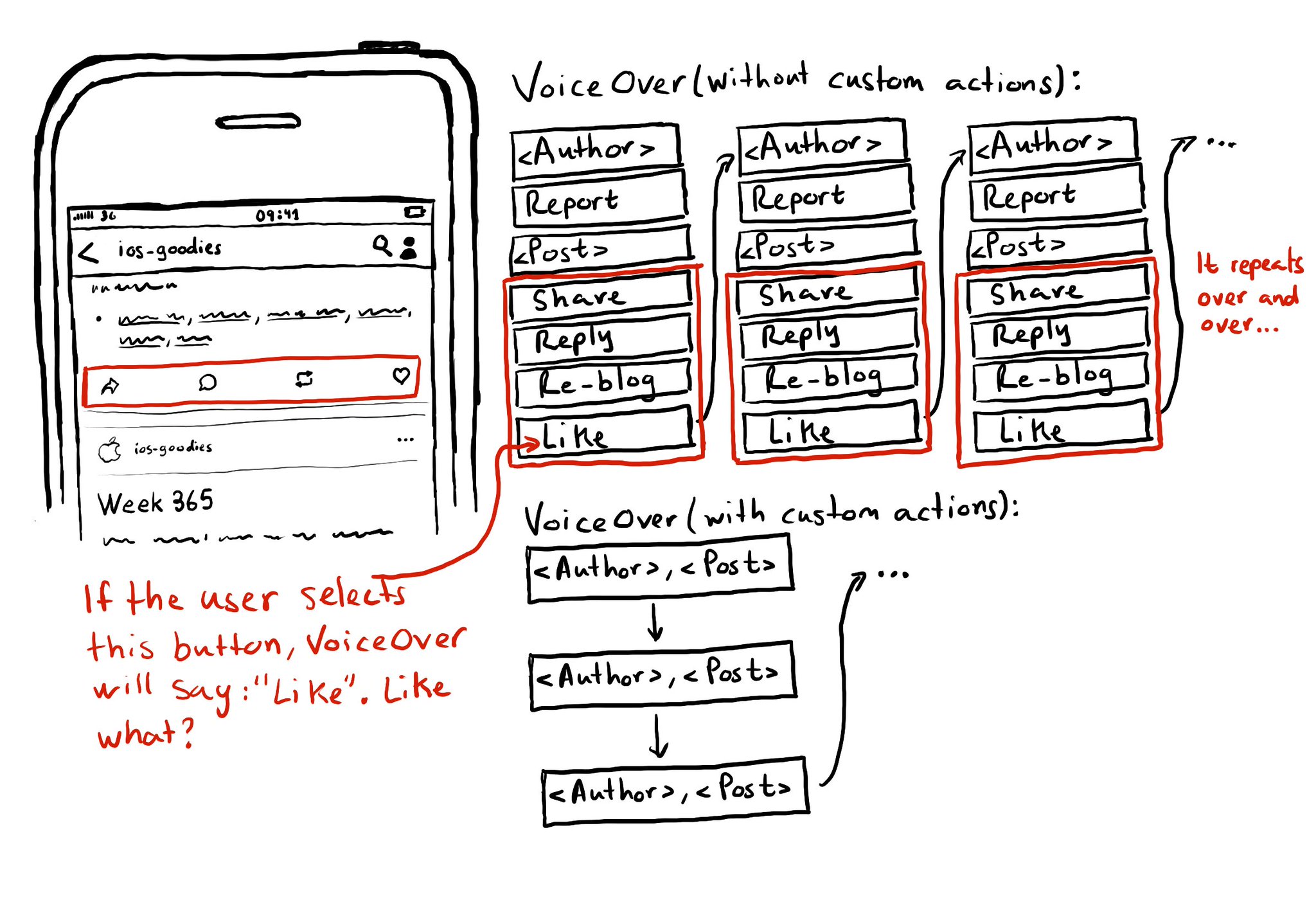

Potential benefits of grouping logical pieces of information and moving buttons to custom actions: reduced redundancy (by removing repetitive controls) and reduced cognitive load (by making it easier to know which item will be affected by each action).
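The grouping and custom actions described above could be sketched like this in UIKit. `SongCell`, its labels, and the action names are hypothetical, and the cell layout is omitted. The accessibility properties and `UIAccessibilityCustomAction` are real UIKit APIs.

```swift
import UIKit

// Hypothetical cell showing a song with a couple of buttons.
final class SongCell: UITableViewCell {
    let titleLabel = UILabel()
    let artistLabel = UILabel()

    func configure(title: String, artist: String) {
        titleLabel.text = title
        artistLabel.text = artist

        // Group the cell's content into a single accessibility element,
        // so VoiceOver users swipe once per row instead of once per subview.
        isAccessibilityElement = true
        accessibilityLabel = "\(title), \(artist)"

        // Expose the buttons as custom actions on the grouped element.
        accessibilityCustomActions = [
            UIAccessibilityCustomAction(
                name: "Add to playlist",
                target: self,
                selector: #selector(addToPlaylist)
            ),
            UIAccessibilityCustomAction(
                name: "Share",
                target: self,
                selector: #selector(share)
            )
        ]
    }

    // Custom action handlers return true when the action succeeded.
    @objc private func addToPlaylist() -> Bool {
        // Hypothetical: add the song to a playlist here.
        return true
    }

    @objc private func share() -> Bool {
        // Hypothetical: present a share sheet here.
        return true
    }
}
```

With this setup, each row reads as one item and its actions are offered in context, so it is clear which song each action applies to.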

Content © Daniel Devesa Derksen-Staats on Accessibility up to 11! is licensed under CC BY 4.0.