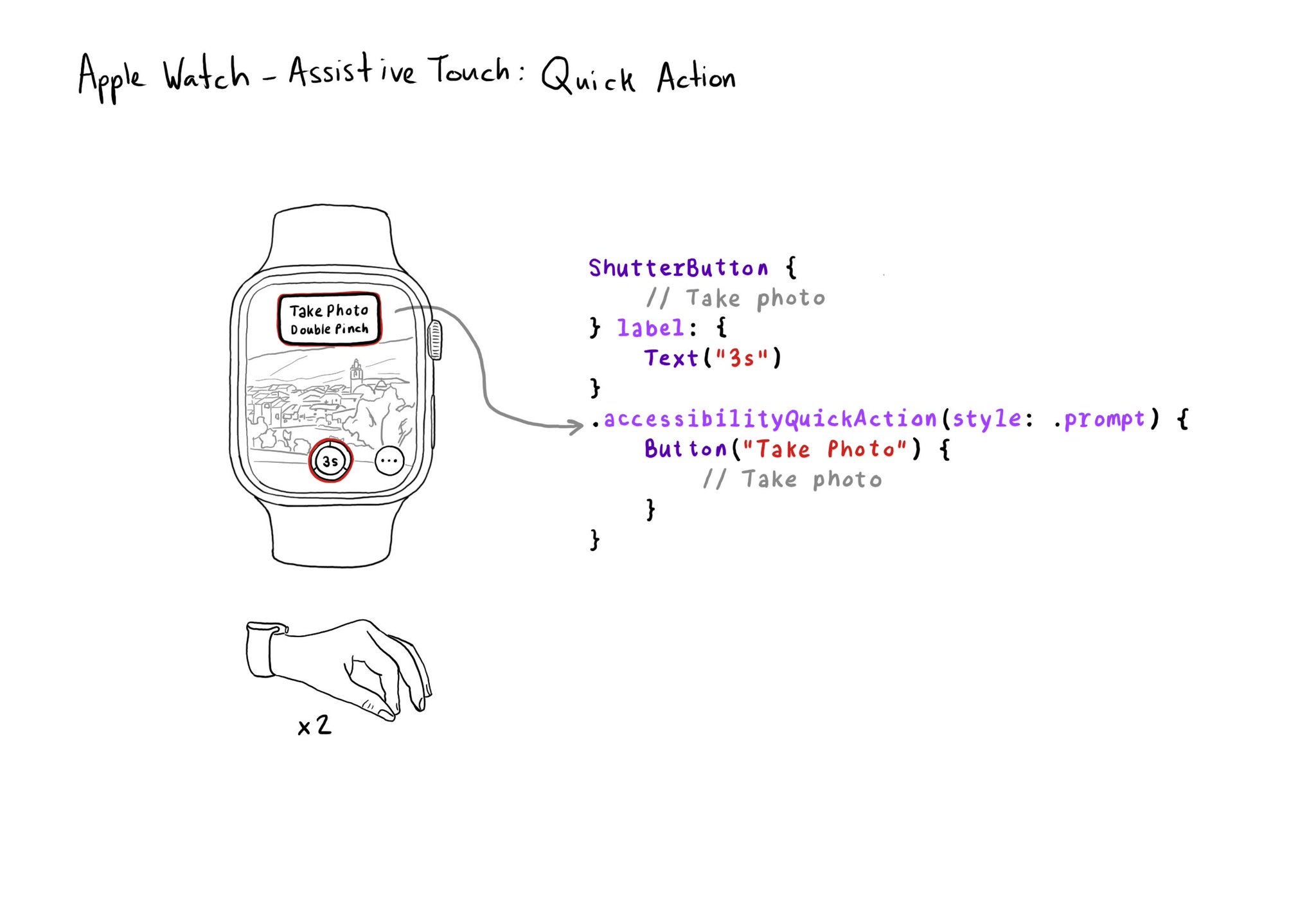

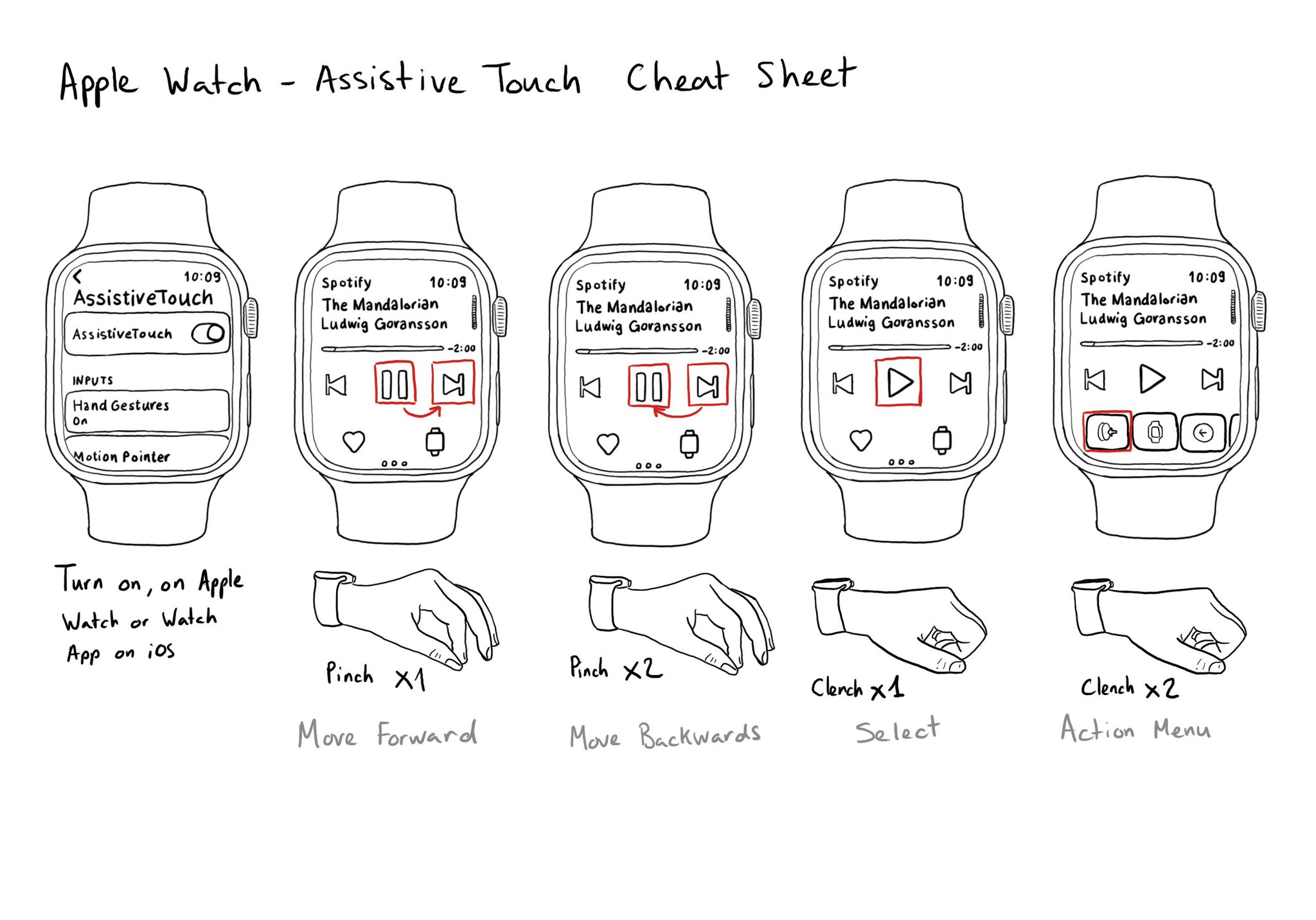

If your watch app has good VoiceOver support, chances are you'll also have good AssistiveTouch support. One improvement you can make, though, is to implement a quick action (triggered with a double pinch) when the screen has a main action the user can perform.
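As a minimal sketch of what that could look like in SwiftUI, assuming watchOS 9 or later: the `PlayerView` screen and its play/pause state are hypothetical and only there for illustration. The `accessibilityQuickAction(style:content:)` modifier wraps the button that the double pinch should trigger.

```swift
import SwiftUI

// Hypothetical watch screen whose main action is toggling playback.
struct PlayerView: View {
    @State private var isPlaying = false

    var body: some View {
        VStack {
            Text(isPlaying ? "Playing" : "Paused")

            Button(isPlaying ? "Pause" : "Play") {
                isPlaying.toggle()
            }
        }
        // With AssistiveTouch on, a double pinch performs the button
        // declared inside the quick action.
        .accessibilityQuickAction(style: .prompt) {
            Button(isPlaying ? "Pause" : "Play") {
                isPlaying.toggle()
            }
        }
    }
}
```

The `.prompt` style shows a short hint that a quick action is available; `.outline` is the other built-in style and may fit better when the action maps to one visible control.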

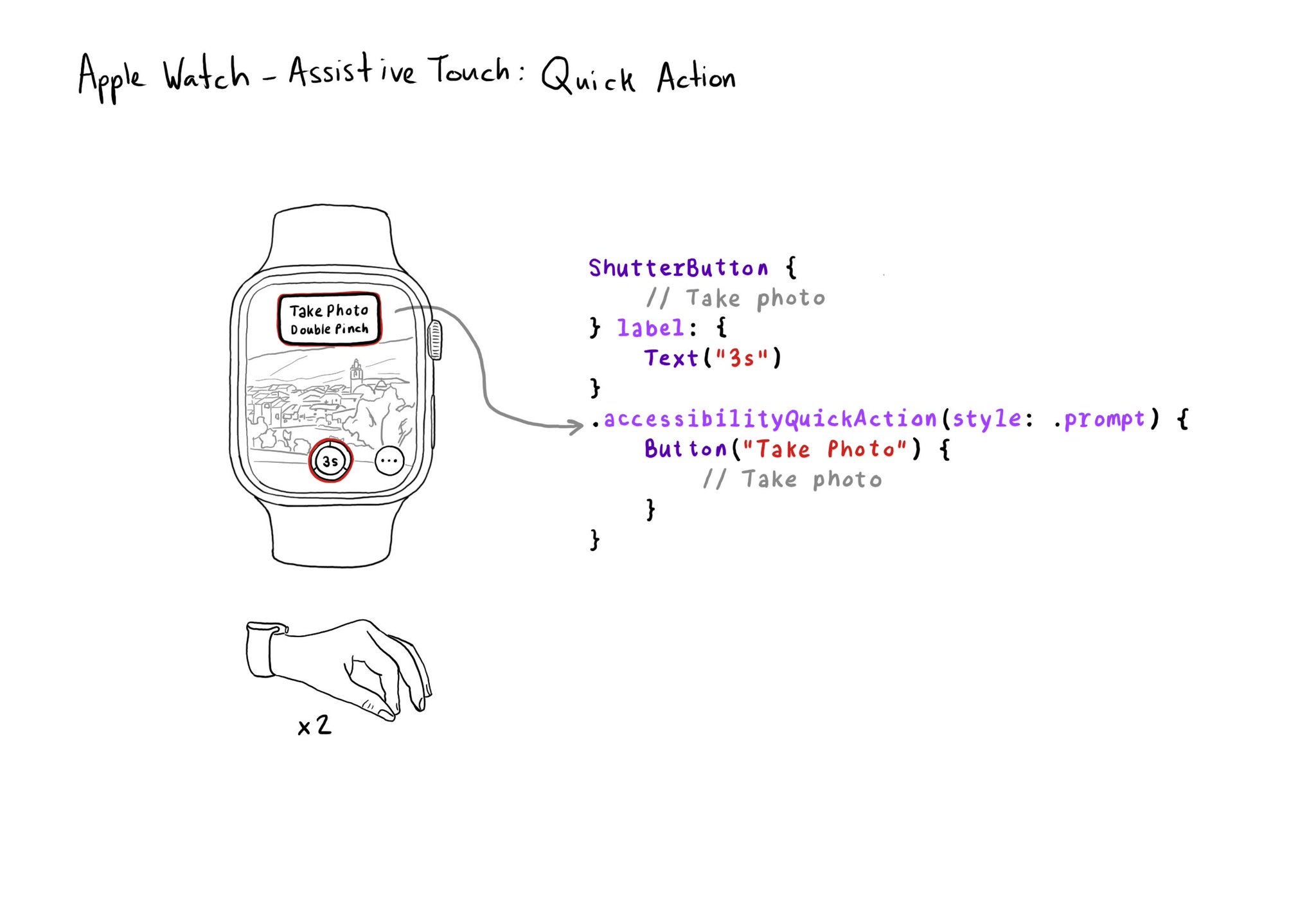

AssistiveTouch for the Apple Watch works like magic. It lets you control your watch with gestures made by the hand of the same arm you wear it on. No need to use your nose! If you don't have it on, it's probably because you don't know about it.

The Accessibility APIs are generic and flexible. They're not just for VoiceOver. If you implement them right, you do the work once and it will very likely work great for VoiceOver, Voice Control, Switch Control, Full Keyboard Access, and more. That's why, to start with, we tend to focus on VoiceOver, the same way you may focus on keyboard navigation for the web. A great VoiceOver experience will get you most of the way to a good experience with the other assistive technologies.

We've seen one example with Custom Actions. One implementation works for:

- VoiceOver: https://x.com/dadederk/status/1550099327053451266
- Switch Control: https://x.com/dadederk/status/1551236244088279040
- Full Keyboard Access: https://x.com/dadederk/status/1551874732504629249
- Voice Control: https://x.com/dadederk/status/1552253520182640645

Of course, that doesn't mean you can skip testing the experience with the other technologies. But if you feel overwhelmed, or you're on a small team, making sure your app works with VoiceOver is a great start.
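To make the Custom Actions example concrete, here is a minimal UIKit sketch, assuming iOS 13 or later for the closure-based initializer. The `MessageCell` class, its `onReply` and `onDelete` closures, and the action names are all hypothetical. The point is that the actions are declared once on the element, and each assistive technology presents them in its own way.

```swift
import UIKit

// Hypothetical cell that exposes its secondary actions as custom actions.
final class MessageCell: UITableViewCell {
    // Hypothetical callbacks supplied by whoever configures the cell.
    var onReply: (() -> Void)?
    var onDelete: (() -> Void)?

    func configure(with message: String) {
        textLabel?.text = message

        // Expose the whole cell as a single accessibility element.
        isAccessibilityElement = true
        accessibilityLabel = message

        // Declared once; assistive technologies surface these actions
        // through their own interaction model.
        accessibilityCustomActions = [
            UIAccessibilityCustomAction(name: "Reply") { [weak self] _ in
                self?.onReply?()
                return true // Report that the action was handled.
            },
            UIAccessibilityCustomAction(name: "Delete") { [weak self] _ in
                self?.onDelete?()
                return true
            }
        ]
    }
}
```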

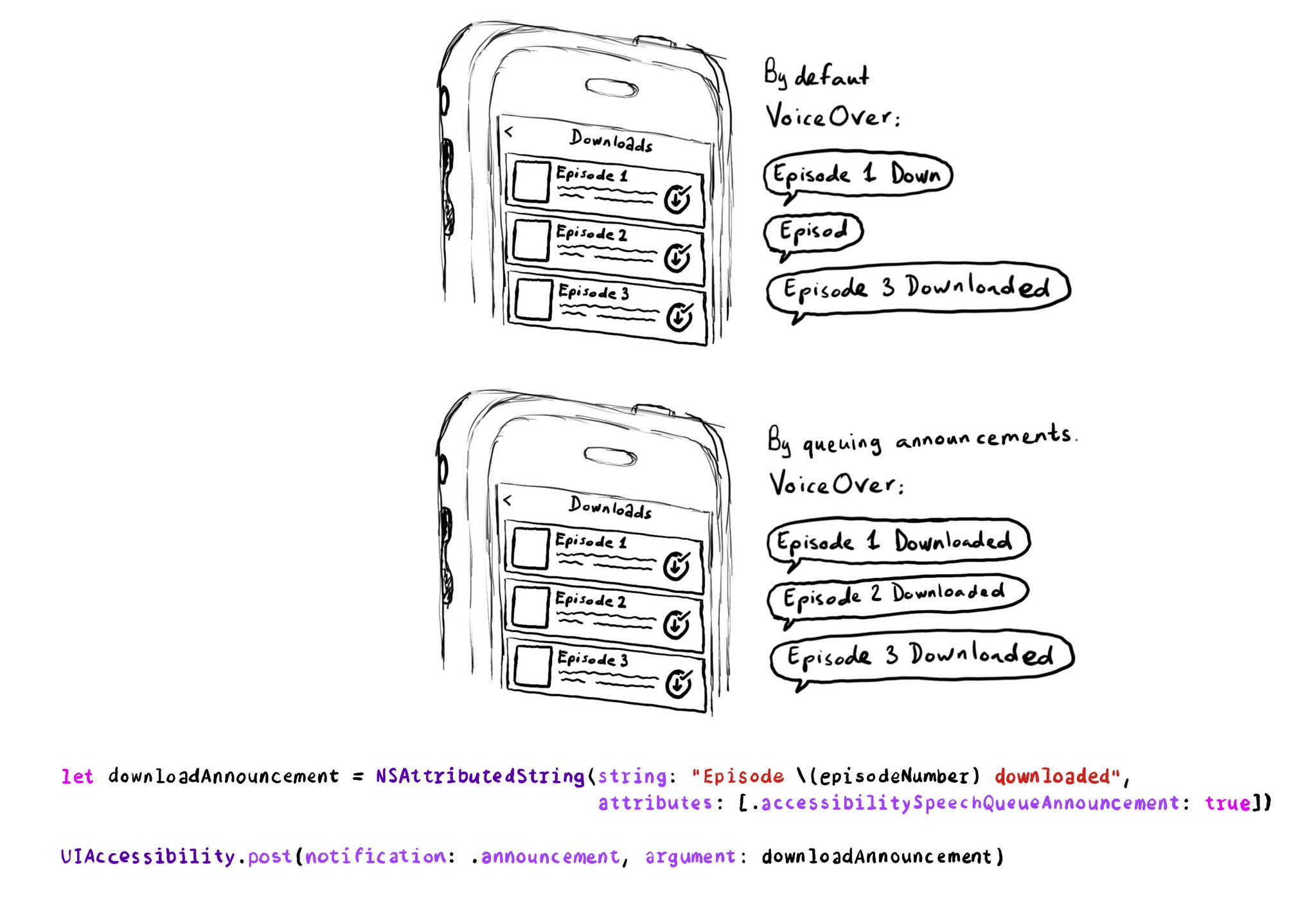

If you need to post announcement notifications that may overlap, each new one will, by default, interrupt the announcement in progress. But you can also pass an attributed string as the argument, which lets you mark an announcement to be queued instead.
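A minimal sketch of a queued announcement in UIKit follows. The `announce(_:queued:)` helper and the example messages are hypothetical; the attributed string key `accessibilitySpeechQueueAnnouncement` is the piece that asks for queuing rather than interruption.

```swift
import UIKit

// Hypothetical helper: posts an announcement, optionally asking the
// system to queue it behind any announcement already being spoken.
func announce(_ message: String, queued: Bool) {
    let attributed = NSAttributedString(
        string: message,
        attributes: [.accessibilitySpeechQueueAnnouncement: queued]
    )
    UIAccessibility.post(notification: .announcement, argument: attributed)
}

// The second message is marked as queued, so it should wait for the
// first one instead of cutting it off.
announce("Upload started", queued: false)
announce("Upload finished", queued: true)
```

It's worth testing on a device with VoiceOver running, since announcements can still be dropped when other speech takes priority.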

Content © Daniel Devesa Derksen-Staats on Accessibility up to 11! is licensed under CC BY 4.0. License details