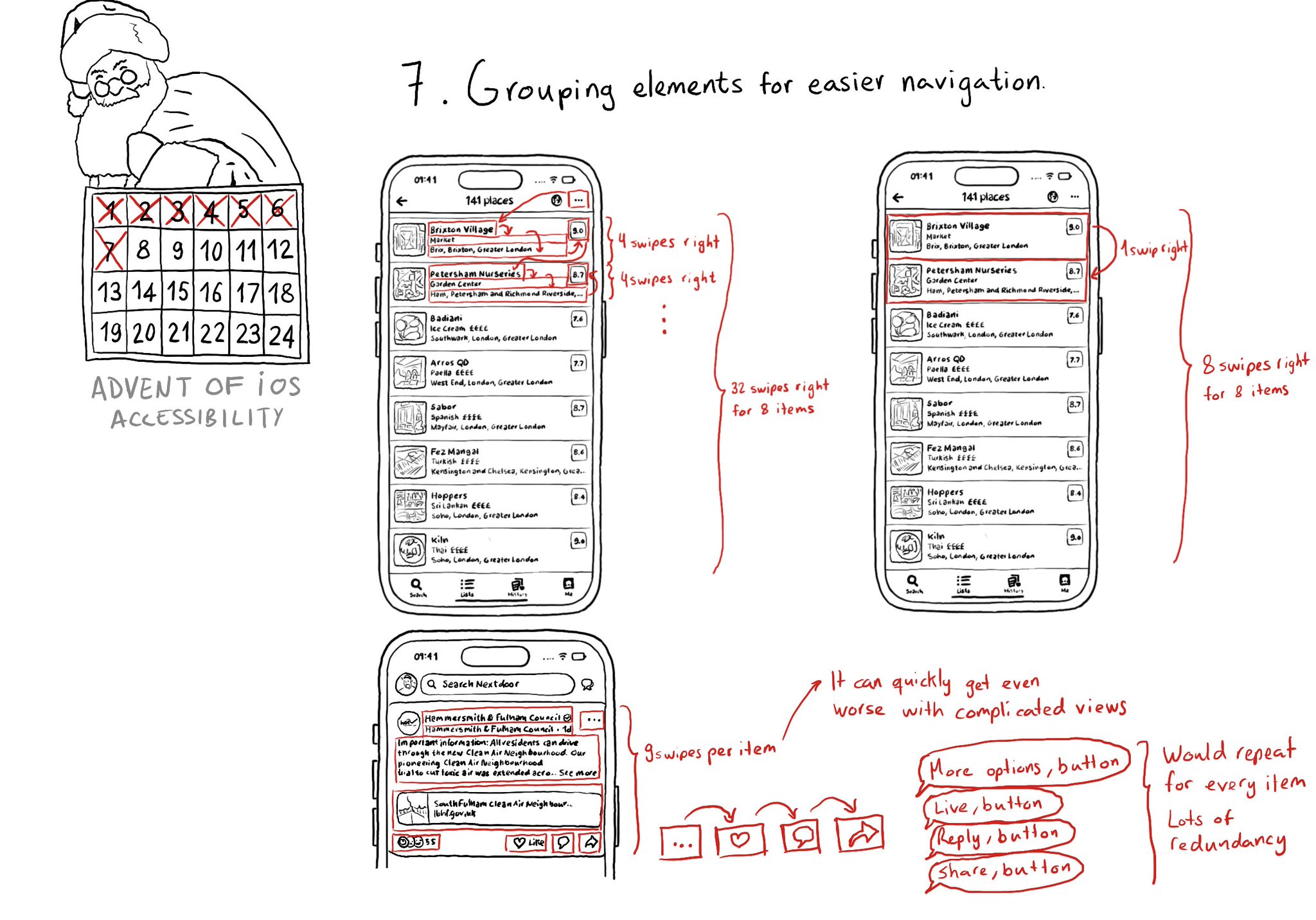

Grouping elements where it makes sense can make navigation much easier with assistive technologies like VoiceOver, Switch Control, or Full Keyboard Access. It also helps reduce redundancy.
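
As a minimal UIKit sketch of grouping, a container view can be exposed as a single accessibility element whose label combines the text of its children. The `ProductSummaryView` type and its `configure(title:price:)` method are hypothetical names used only for illustration, and layout code is omitted.

```swift
import UIKit

/// Hypothetical view that shows a title and a price side by side.
final class ProductSummaryView: UIView {
    let titleLabel = UILabel()
    let priceLabel = UILabel()

    func configure(title: String, price: String) {
        titleLabel.text = title
        priceLabel.text = price

        // Expose the container itself as one accessibility element.
        // Its subviews are then no longer visited individually.
        isAccessibilityElement = true

        // Combine the children's text into a single label.
        accessibilityLabel = "\(title), \(price)"
    }
}
```

With this in place, assistive technologies should stop once on the whole view instead of once per label.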

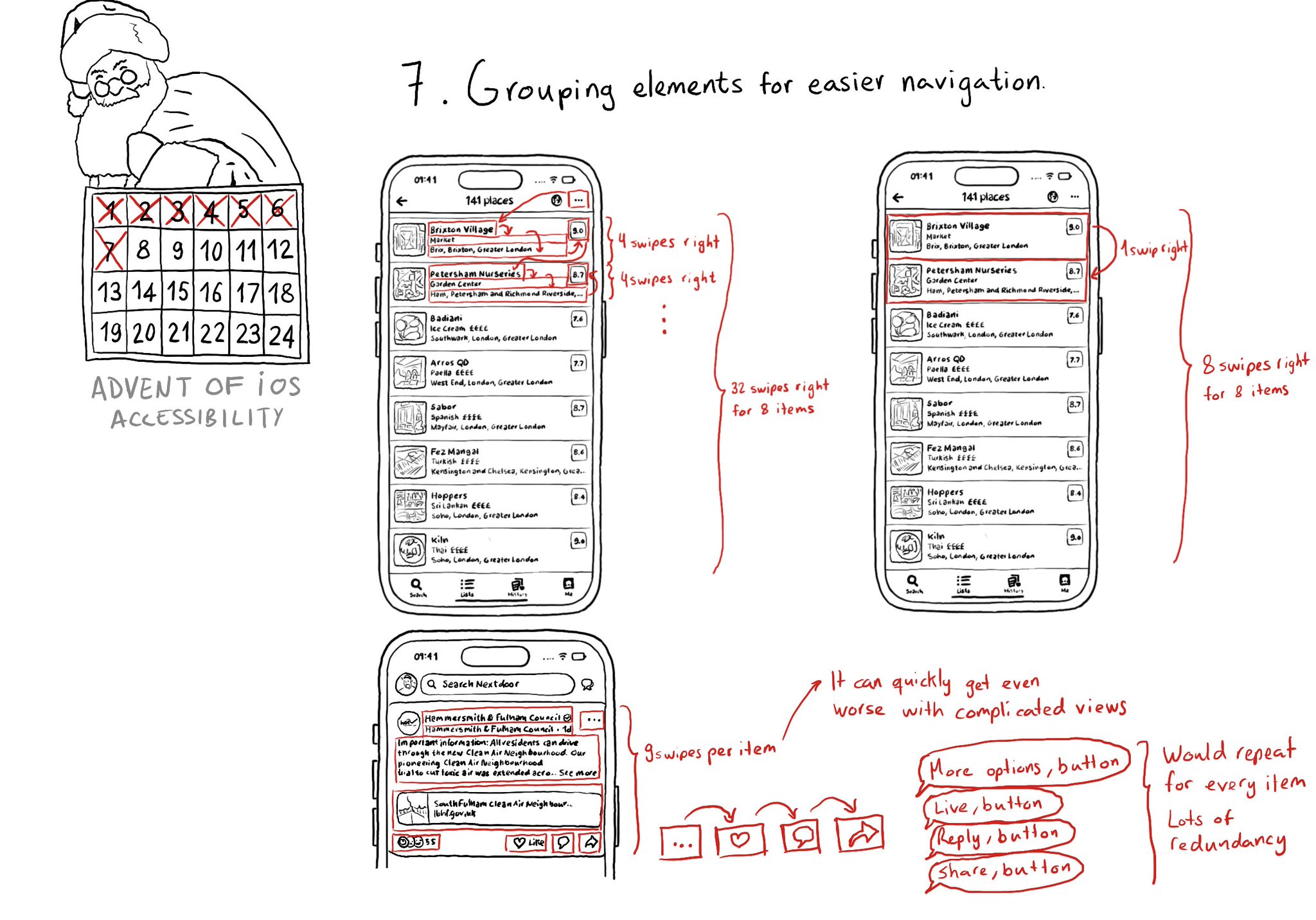

When an element is focused with VoiceOver, double-tapping the screen acts like a tap on the centre of the focused element. If you need to change that, you can customise the accessibilityActivationPoint. https://developer.apple.com/documentation/objectivec/nsobject-swift.class/accessibilityactivationpoint
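
One possible use, sketched below, is a grouped row where a double tap should land on a switch rather than on the centre of the row. `SettingRowView` is a hypothetical type, and the sketch assumes the row has been made a single accessibility element and that the switch is laid out elsewhere.

```swift
import UIKit

/// Hypothetical row that groups a name label with a switch.
final class SettingRowView: UIView {
    let nameLabel = UILabel()
    let toggle = UISwitch()

    override var accessibilityActivationPoint: CGPoint {
        get {
            // The activation point is expressed in screen coordinates,
            // so convert the switch's bounds before taking its centre.
            let frameOnScreen = UIAccessibility.convertToScreenCoordinates(toggle.bounds, in: toggle)
            return CGPoint(x: frameOnScreen.midX, y: frameOnScreen.midY)
        }
        set { super.accessibilityActivationPoint = newValue }
    }
}
```

Computing the point in the getter, rather than setting it once, keeps it in step with the switch if the row is resized or scrolled.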

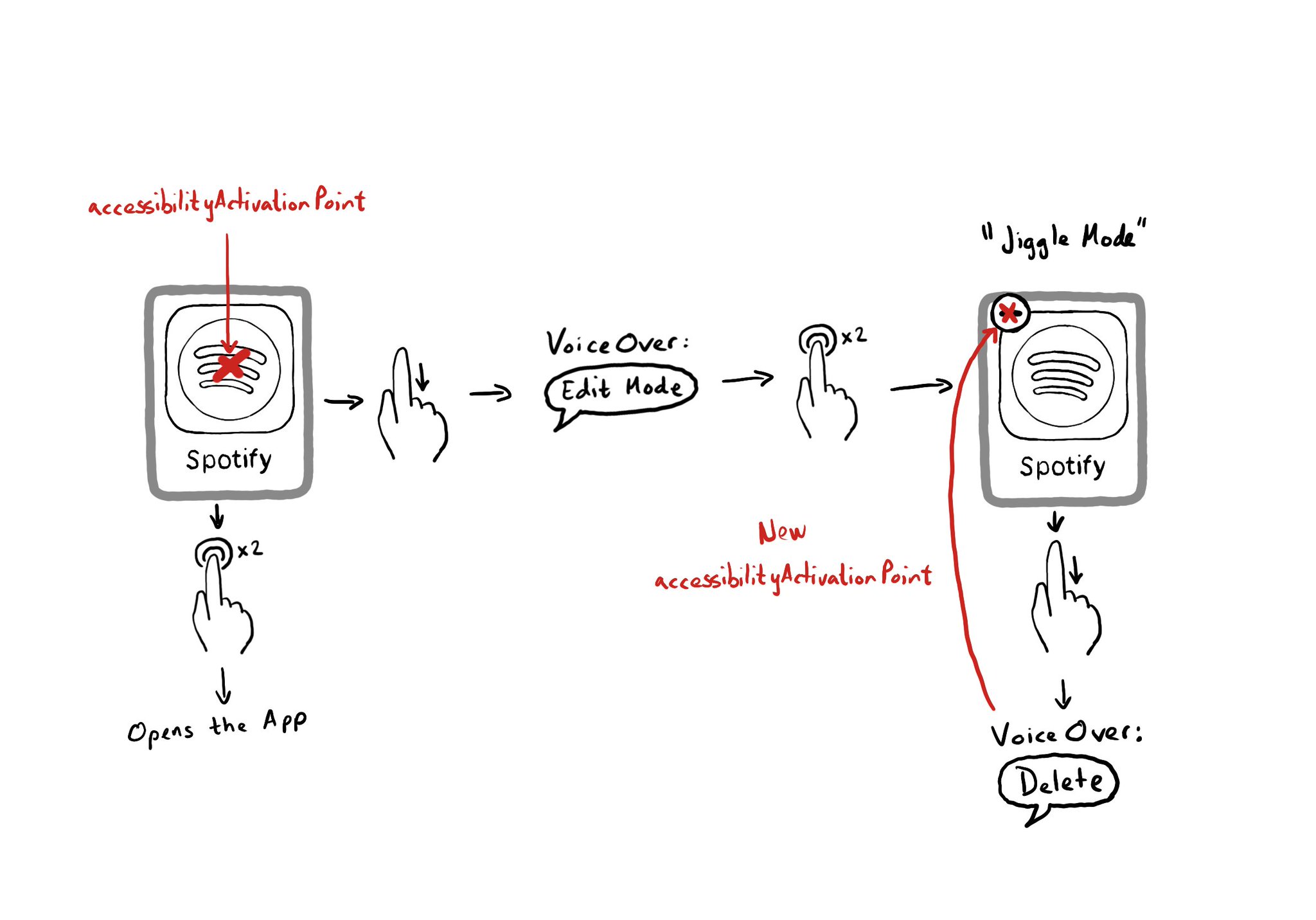

Accessibility Labels are not just for VoiceOver, and Accessibility User Input Labels are not just for Voice Control. The latter will also help Full Keyboard Access users to find elements on the screen by different names. Good API design!
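
A short sketch of how the two properties can sit side by side on an icon-only button. The `makeSettingsButton()` helper and the alternative names are illustrative assumptions, not taken from the original post.

```swift
import UIKit

/// Hypothetical factory for an icon-only settings button.
func makeSettingsButton() -> UIButton {
    let button = UIButton(type: .system)
    button.setImage(UIImage(systemName: "gear"), for: .normal)

    // The name exposed to assistive technologies such as VoiceOver.
    button.accessibilityLabel = "Settings"

    // Alternative names a user might say or type to find the button,
    // listed in order of preference.
    button.accessibilityUserInputLabels = ["Settings", "Preferences", "Gear"]
    return button
}
```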

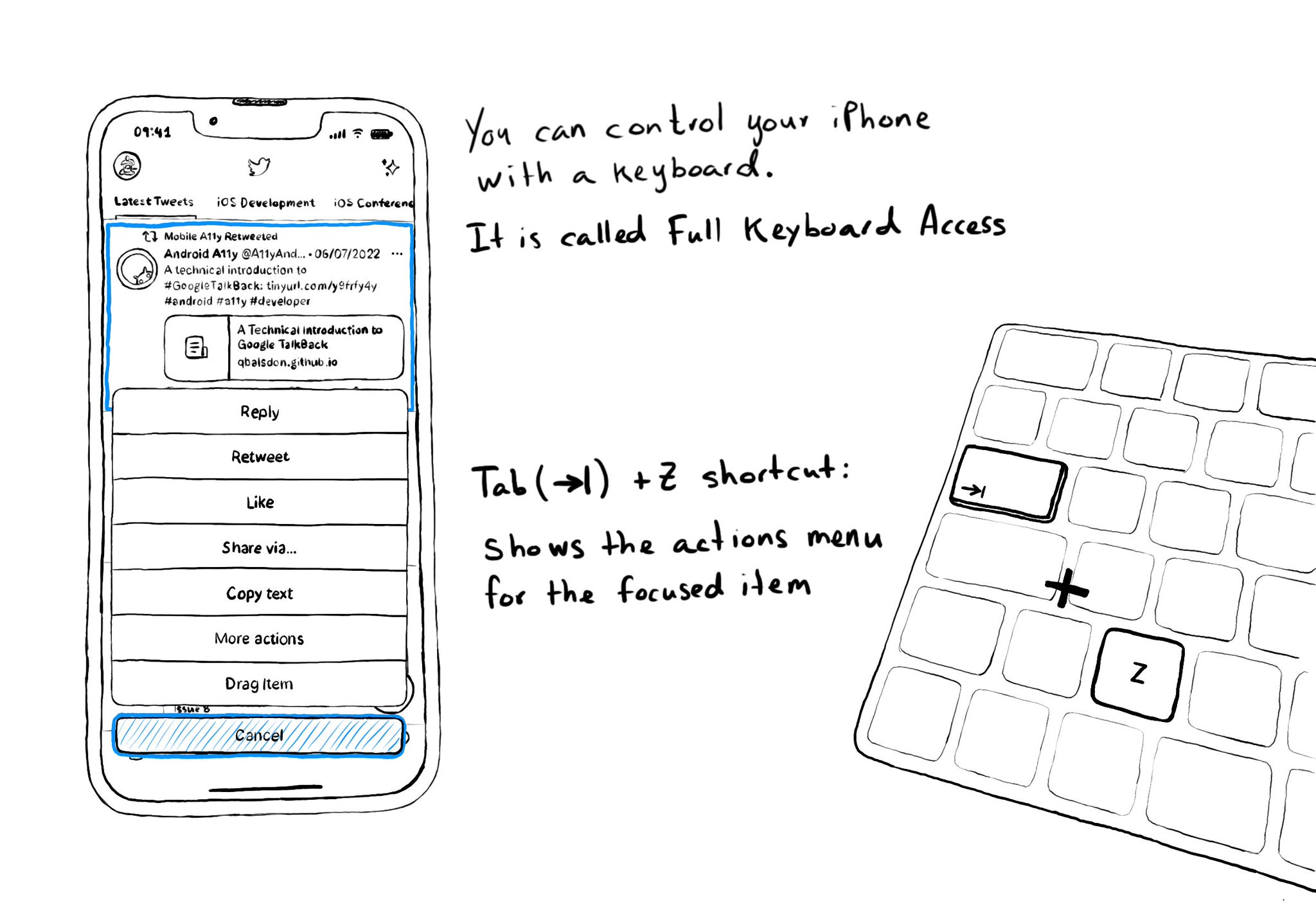

Custom actions work well with VoiceOver and Switch Control. With Full Keyboard Access, they are also a way of speeding up navigation and of grouping all the actions available for an item in a single place: focus an item and use the shortcut Tab (⇥) + Z.
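
As a rough sketch, custom actions can be attached to a cell like this. `MessageCell`, its `onArchive` and `onDelete` callbacks, and the action names are hypothetical and only there to make the example self-contained.

```swift
import UIKit

/// Hypothetical cell that offers archive and delete actions.
final class MessageCell: UITableViewCell {
    var onArchive: (() -> Void)?
    var onDelete: (() -> Void)?

    func configureAccessibilityActions() {
        accessibilityCustomActions = [
            UIAccessibilityCustomAction(name: "Archive") { [weak self] _ in
                self?.onArchive?()
                return true // report that the action was handled
            },
            UIAccessibilityCustomAction(name: "Delete") { [weak self] _ in
                self?.onDelete?()
                return true
            }
        ]
    }
}
```

Because the actions live on the cell itself, they are offered together for that one item rather than as separate buttons to navigate through.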

Content © Daniel Devesa Derksen-Staats on Accessibility up to 11! is licensed under CC BY 4.0. License details